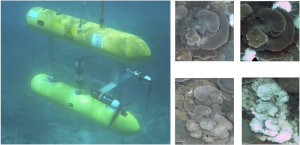

The ACFR Marine Robotics group undertakes fundamental and applied research in the areas of sensing, navigation and mapping, classification and clustering, planning and systems design.

Navigation and Mapping

Much of our research is focused on improved methods for navigation and mapping for Autonomous Underwater Vehicle (AUV) systems. We have developed an extensive set of tools for Simultaneous Localisation and Mapping (SLAM) based on high-resolution stereo vision information collected by our AUVs. This has allowed us to generate detailed three-dimensional seafloor models by combining tens of thousands of images and exploiting the known structure derived from the stereo imagery. We have also developed methods for bathymetric SLAM and for exploiting Acoustic Doppler Current Profiler (ADCP) observations to improve the quality of navigation in the mid-water column.

Clustering in Large Image Archives

The use of robots for scientific mapping and exploration can result in large, rapidly growing data sets that make complete analysis by humans infeasible. This situation highlights the need for automated means of converting raw data into scientifically relevant information. We have developed Bayesian clustering models for labelling large quantities of seafloor imagery in an unsupervised manner. This approach has the attractive property that it does not require the number of clusters to be known a priori, which enables truly autonomous sensor data abstraction. The underlying data representation is also learned using unsupervised feature learning techniques. This approach consistently produces easily recognisable clusters that approximately correspond to different habitat types. These clusters are useful for observing spatial patterns, focusing expert analysis on subsets of seafloor imagery, aiding mission planning, and potentially informing real-time adaptive sampling.
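To illustrate what clustering without a preset number of clusters can look like, here is a minimal sketch of DP-means, a hard-assignment algorithm derived from a Dirichlet-process mixture model. It is a stand-in chosen for brevity, not the Bayesian models described above. The function name `dp_means`, the penalty `lam` and the toy 2D "feature vectors" are all assumptions made for this example.

```python
def dp_means(points, lam, max_iter=100):
    """Cluster points with DP-means (illustrative stand-in, see text).

    lam is a squared-distance threshold: a point farther than this from
    every existing centre opens a new cluster, so the number of clusters
    is chosen by the data rather than fixed in advance.
    """
    n, dim = len(points), len(points[0])
    # Start with a single cluster at the global mean.
    centres = [tuple(sum(p[i] for p in points) / n for i in range(dim))]
    labels = [0] * n
    for _ in range(max_iter):
        changed = False
        # Assignment step: nearest centre, or a new cluster if too far.
        for idx, p in enumerate(points):
            d2 = [sum((a - b) ** 2 for a, b in zip(p, c)) for c in centres]
            best = min(range(len(centres)), key=d2.__getitem__)
            if d2[best] > lam:
                centres.append(tuple(p))
                best = len(centres) - 1
            if labels[idx] != best:
                labels[idx] = best
                changed = True
        # Update step: drop empty clusters, renumber, recompute means.
        used = sorted(set(labels))
        remap = {old: new for new, old in enumerate(used)}
        labels = [remap[l] for l in labels]
        centres = []
        for k in range(len(used)):
            members = [p for p, l in zip(points, labels) if l == k]
            centres.append(
                tuple(sum(m[i] for m in members) / len(members) for i in range(dim))
            )
        if not changed:
            break
    return labels, centres


# Hypothetical 2D feature vectors forming three well-separated groups.
points = [
    (0.0, 0.0), (0.1, 0.0), (0.0, 0.1),
    (5.0, 5.0), (5.1, 5.0), (5.0, 5.1),
    (10.0, 0.0), (10.1, 0.0), (10.0, 0.1),
]
labels, centres = dp_means(points, lam=4.0)
print(len(centres), labels)
```

The only tuning parameter is `lam`: a smaller value yields more, finer clusters and a larger value yields fewer, coarser ones. In a fully Bayesian treatment, that trade-off is governed by the prior rather than by a hard threshold.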

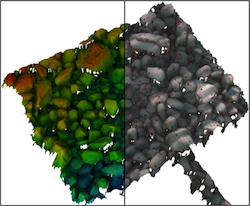

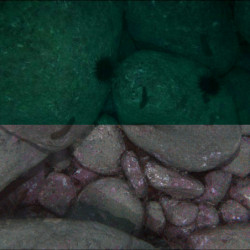

Underwater Image Colour Correction

When capturing images underwater, the water column imposes several effects that are negligible in air, such as colour-dependent attenuation and lighting patterns. These effects cause problems for human interpretation of images and also confound computer-based techniques for clustering and classification. Our approach exploits the 3D structure of the scene, generated using structure-from-motion and photogrammetry techniques, to account for distance-based attenuation, vignetting and lighting patterns, which improves the consistency of photo-textured 3D models.
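The distance-based part of such a correction can be sketched with a simple exponential attenuation model, in which each colour channel decays as exp(-beta * d) over a path length d. This is a minimal sketch under that assumed model only. It ignores vignetting and lighting patterns, and the function name and the coefficients in `BETA` are hypothetical values chosen for illustration, not measured ones.

```python
import math

# Hypothetical attenuation coefficients per metre for (R, G, B).
# Red is given the largest value because red light is absorbed most
# strongly in water; the numbers themselves are illustrative only.
BETA = (0.40, 0.10, 0.05)


def compensate_attenuation(pixel, distance_m, beta=BETA):
    """Undo exponential per-channel attenuation for one pixel.

    Assumed model: observed = true * exp(-beta * distance), so the
    correction multiplies each channel by exp(+beta * distance).
    distance_m is the range to the scene point, which is the quantity
    a 3D scene reconstruction would supply for each pixel.
    """
    return tuple(v * math.exp(b * distance_m) for v, b in zip(pixel, beta))


# Simulate a neutral grey surface seen from 2 m, then correct it.
true_colour = (0.5, 0.5, 0.5)
distance = 2.0
observed = tuple(v * math.exp(-b * distance) for v, b in zip(true_colour, BETA))
corrected = compensate_attenuation(observed, distance)
print(observed, corrected)
```

Because the gain grows exponentially with range, errors in the range estimate are amplified most in the red channel. This is one reason a per-pixel range from the 3D structure is more useful than a single nominal altitude.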

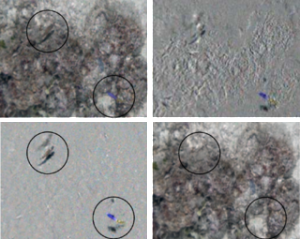

Plenoptic Imaging

We have also been investigating the use of light-field, or plenoptic, imaging systems to mitigate the impact of backscatter and to provide novel mechanisms for detecting changes and calculating optical flow in underwater scenes. Plenoptic cameras gather more light than conventional cameras and capture a rich light-field structure that encodes both textural and geometric information. This work is focused on developing novel ways of exploiting plenoptic imagery, from mitigating the effects of murky and silty water to closed-form visual odometry and change detection from a moving platform.

Automated Image Analysis

Typically, less than 1–2% of the images collected during benthic surveys end up being annotated and processed for science purposes, and usually only a subset of the pixels within each image is scored. As a result, only a tiny fraction of the total amount of collected data is utilised, O(0.00001%). We have a number of research projects aimed at leveraging these sparse, human-annotated point labels to train Machine Learning algorithms that assist with data analysis.
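A back-of-envelope calculation shows how a figure of that order can arise. The 1% image fraction is taken from the low end of the range quoted above. The number of point labels per image, the image resolution and the idea that each label covers a single pixel are assumptions made only for this example.

```python
def annotated_pixel_fraction(image_fraction, points_per_image, width_px, height_px):
    """Fraction of all collected pixels that carry a human label.

    Assumes each point label corresponds to exactly one pixel.
    """
    return image_fraction * points_per_image / (width_px * height_px)


# Assumed values: 1% of images annotated, 50 point labels per image,
# 1360 x 1024 pixel images.
fraction = annotated_pixel_fraction(0.01, 50, 1360, 1024)
percent = fraction * 100
print(f"{percent:.7f}% of pixels are labelled")
```

With these assumed inputs the result is a few hundred-thousandths of a percent, which matches the order of magnitude quoted above.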

Autonomous Repeatable Surveying

This project aims to develop algorithms and methods required to enable robotic platforms to perform repeatable, high-resolution surveys of marine habitats. Current research is focussed on developments in data processing of multi-year repeat survey imagery and other map data for precision automatic registration and change detection.

Managing Large Geospatial Image Archives

Check out our Squidle online interface that manages the millions of seafloor images in our online archive. This site also provides tools for selecting subsets of images to be annotated by expert users.